The Mystery of Voter Integrity's Missing Data

Their "data cleaning" methodology is the real anomaly

One important point I haven’t addressed in previous discussions is the large discrepancy between the number of vote updates in Voter Integrity’s dataset and the number they actually charted. Voter Integrity’s dataset contains 9,609 vote updates (observations), while their charts contain only 8,954 data points. That is a selective thinning of 655 updates, or roughly 7% of the original data. When I asked Voter Integrity about this missing data, I received the following oblique answer:
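As a quick check on the size of the gap, using only the two counts quoted above:

$$
9{,}609 - 8{,}954 = 655, \qquad \frac{655}{9{,}609} \approx 0.068 \approx 6.8\%
$$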

“Regarding the vote updates which have the totals dropping to zero, we removed those *before* computing successive vote differences. These are almost certainly either data errors themselves or reflect a bizarre way of issuing corrections. Data-cleaning is a very common and normal part of data analysis and shouldn't be regarded as suspicious. The appendix linked to in this report provides the entirety of the code and data used for this report and you can see exactly what we did if you look there.”
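To make the quoted step concrete, here is a minimal sketch of what "removing zero-total updates before computing successive differences" could look like. The `VoteUpdate` record, the assumption that "totals" means the combined Biden + Trump cumulative count, and the function names are all hypothetical illustrations, not code from Voter Integrity's notebook. The example also shows why the order of operations matters: a zero row left in place turns into one large negative jump followed by one large positive jump.

```python
from dataclasses import dataclass

@dataclass
class VoteUpdate:
    # Hypothetical record: cumulative totals reported for one state at one time.
    timestamp: str
    biden: int
    trump: int

def drop_zero_totals(updates):
    """Split updates into (kept, removed), assuming 'totals dropping to zero'
    means the combined Biden + Trump cumulative total is zero."""
    kept, removed = [], []
    for u in updates:
        (removed if u.biden + u.trump == 0 else kept).append(u)
    return kept, removed

def successive_differences(updates):
    """Votes added for each candidate between consecutive cumulative updates."""
    return [
        (cur.biden - prev.biden, cur.trump - prev.trump)
        for prev, cur in zip(updates, updates[1:])
    ]

updates = [
    VoteUpdate("t0", 100, 90),
    VoteUpdate("t1", 0, 0),      # a spurious zero-total update
    VoteUpdate("t2", 150, 120),
]

print(successive_differences(updates))              # [(-100, -90), (150, 120)]
kept, removed = drop_zero_totals(updates)
print(len(removed), successive_differences(kept))   # 1 [(50, 30)]
```

Returning the removed rows as well as the kept ones makes it easy to report exactly what a cleaning rule discarded, and why.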

In real life, data scientists are circumspect and meticulous about the methodology they use to clean their data, because "cleaning" by its very nature removes anomalies and changes the results of the analysis. Yet looking through Voter Integrity’s Jupyter notebook and code, I found no documentation of how they decided to remove the 655 most troublesome updates, the ones that might have affected their graphs.

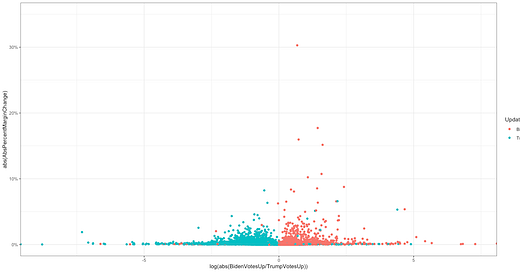

Just for fun, I’m going to post a graph similar to the one Voter Integrity uses to highlight "high probability fraud anomalies". This time, though, I’m including all data points instead of gleaning just the ones that make my point. The chart plots the natural log (ln) of the absolute value of the percentage margin difference (the margin difference in a vote update divided by the total votes cast in that state) against the absolute value of the Biden/Trump ratio.
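For readers who want to reproduce the axes, here is a minimal sketch of how one point of such a chart could be computed. It assumes the Biden/Trump ratio is the ratio of the votes each candidate gained in that update, and that the margin difference is Biden's gain minus Trump's gain; `chart_point` and `state_total` are hypothetical names, not Voter Integrity's code. Updates where Trump gained nothing, or where the margin did not change, have no defined position on these axes (division by zero or ln(0)), so the sketch returns `None` for them.

```python
import math

def chart_point(d_biden, d_trump, state_total):
    """Return (x, y) for one vote update, or None if a coordinate is undefined.

    Assumed definitions (hypothetical, for illustration):
      x = |d_biden / d_trump|                     (Biden/Trump ratio of the update)
      y = ln(|d_biden - d_trump| / state_total)   (margin change as a fraction)
    """
    margin_change = d_biden - d_trump
    if d_trump == 0 or margin_change == 0:
        # Division by zero or ln(0): the point has no place on these axes.
        return None
    x = abs(d_biden / d_trump)
    y = math.log(abs(margin_change) / state_total)
    return x, y

# Made-up example: an update adding 50 Biden and 30 Trump votes
# in a state with 1,000 total votes cast.
print(chart_point(50, 30, 1000))
```

Expressing the margin as a true percentage (multiplying by 100) would shift every y value by the same constant, ln(100) ≈ 4.6, so the shape of the scatter would not change.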